How autonomous can driverless cars be if they are programmed by humans, who have inherent biases? This article discusses this and other issues that society will have to grapple with if autonomous vehicles are to become the norm.

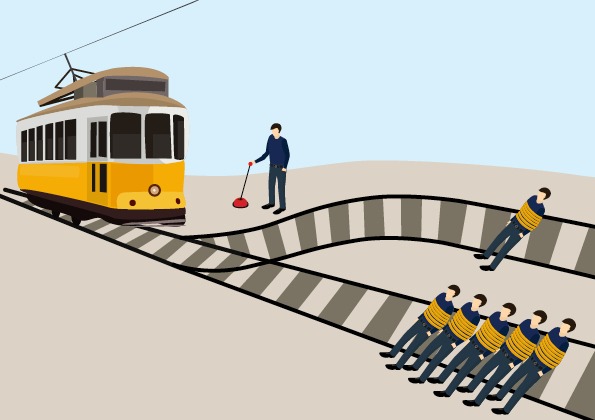

A runaway trolley is heading quickly towards five people who are tied to the tracks. You can save them by pulling a lever, which diverts the trolley towards only one other person instead. Would you pull the lever? Famously known as the Trolley Problem, this thought experiment was developed by philosopher Philippa Foot to examine such moral dilemmas.

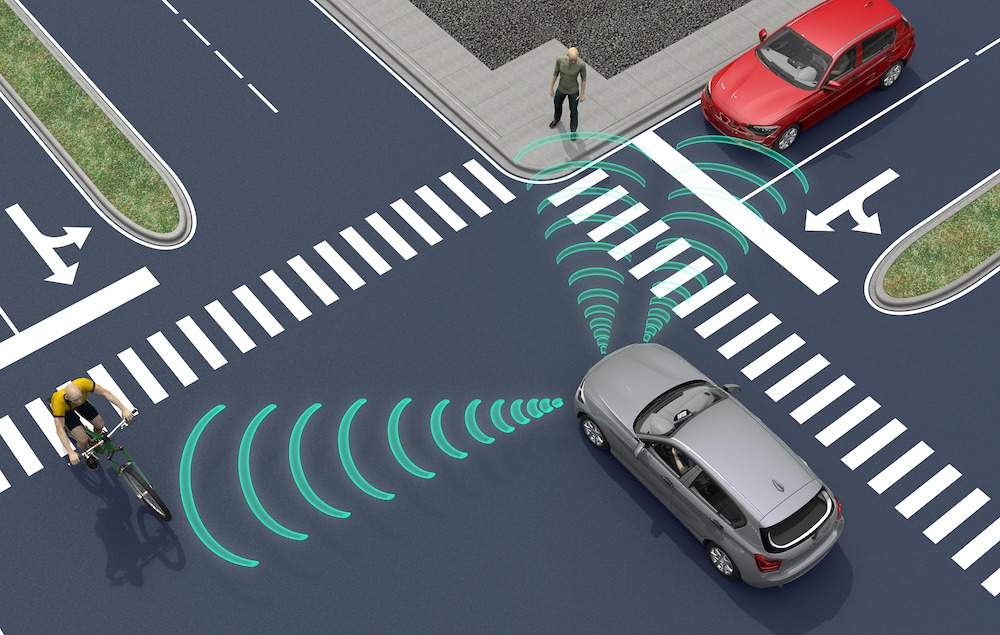

The world’s biggest companies — Google, Tesla, Ford, Uber — are working hard to be the first to unleash their driverless vehicles on public roads. While some of us are looking forward to artificial intelligence and robot cars in the not-so-distant future, the autonomous automobile sector has had to face the kind of decision-making dilemma described earlier — but involving a lot more than just comparing the number of potential casualties.

Take, for instance, whether a car should run over a group of kids or plunge off a cliff and kill its passengers. If a human driver had been behind the wheel, his split-second decision to save himself would be viewed as an instinctive reaction with no malicious intent. But if a self-driving car were programmed to react the same way, the incident could be viewed as premeditated homicide.

Who decides how autonomous vehicles should respond?

This inevitably raises the question: who decides on the algorithms that guide self-driving vehicles? Should engineers or car-makers determine how self-driving cars ‘think’? Although self-driving cars are designed to eliminate human error, which in theory should reduce the number of road accidents, they are inherently designed to systematically favour or target specific types of road users in a crash. Innocent road users will, in turn, bear the brunt of these pre-set algorithms.

As it turns out, it is possible for humankind to reach some kind of consensus. A global survey by the Massachusetts Institute of Technology (MIT) revealed that human lives generally take precedence over those of cats and dogs, and that the lives of the many outweigh the lives of the few. Unlike their counterparts in the West, Asian respondents, including those from Singapore, largely agreed to save the elderly rather than the young. Respondents from China and Japan also differed in their opinions on whether pedestrians or passengers should be saved.

Let us assume for a minute that engineers and car-makers could offload such ethical dilemmas to consumers instead. Would you pick a car that would save lives at all cost, or a car that would prioritise your life?

The question of liability

Another thorny issue relates to liability: who is to blame if a driverless car breaks the law?

In March 2018, a female pedestrian in Arizona, USA, was struck and killed by a self-driving Uber vehicle while walking her bike across the road. This was believed to be the first pedestrian death associated with self-driving technology. While there were no passengers aboard the car, investigations revealed that there was a human safety operator behind the wheel who was watching a show on her phone in the minutes leading up to the crash. To date, however, prosecutors in Arizona have been unable to take Uber to court.

At the heart of this incident are questions regarding the safety of self-driving technology, and the comprehensiveness of regulations governing it. Instead of adopting a wait-and-see approach, governments around the world are urged to anticipate and be prepared for the eventual deployment of self-driving cars and take a prospective approach — which usually has fewer potential sources of bias — in policy making.

Fundamental assumptions

Let us also take a step back from these engineering and ethical problems, and reflect on the fundamental assumptions we have been making.

Given that self-driving vehicles are ‘smarter’ than human drivers, why should these vehicles be designed to conform to a human-designed traffic system? If we could revamp all our roads, what might they look like?

As we journey towards a driverless future, it is inevitable that society stress-tests its instincts against such ethical thought experiments. However, with self-driving cars being potentially safer and more reliable than human drivers, focusing on moral dilemmas alone may distract policymakers from ensuring a safe transition and derail our progress into a brave new world.